- INSTALL SPARK UBUNTU HOW TO
- INSTALL SPARK UBUNTU INSTALL
- INSTALL SPARK UBUNTU UPDATE
- INSTALL SPARK UBUNTU ARCHIVE

When you install build-essential, apt reports: The following additional packages will be installed: binutils binutils-common binutils-x86-64-linux-gnu cpp cpp-9 dpkg-dev fakeroot g++ g++-9 gcc gcc-9 gcc-9-base libalgorithm-diff-perl libalgorithm-diff-xs-perl libalgorithm-merge-perl libasan5 libatomic1 libbinutils libc-dev-bin libc6-dev libcc1-0 libcrypt-dev libctf-nobfd0 libctf0 libdpkg-perl libfakeroot

# INSTALL SPARK UBUNTU INSTALL #

The basic tools for compiling and building packages can easily be installed with the following command:

:~$ sudo apt install build-essential

Once the installation has completed, all of these tools should be available.
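A small check like the one below can confirm that the toolchain is on your PATH after installing build-essential. This is a minimal sketch. The function name check_tools is hypothetical, and the tool list (gcc, g++, make) is an assumption about what you might want to verify, not something this post prescribes.

```shell
# Hypothetical helper: report whether each named command is available on PATH.
# Prints "found: NAME" on stdout or "missing: NAME" on stderr for each argument.
# Returns 0 if every command was found, 1 if any is missing.
check_tools() {
  local missing=0 tool
  for tool in "$@"; do
    if command -v "$tool" >/dev/null 2>&1; then
      echo "found: $tool"
    else
      echo "missing: $tool" >&2
      missing=1
    fi
  done
  return "$missing"
}

# Example usage (assumed tool list for build-essential):
# check_tools gcc g++ make
```

The function takes the tool names as arguments instead of hard-coding them, so you can reuse it to check other prerequisites.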

# INSTALL SPARK UBUNTU UPDATE #

Upgrading the system completely is the first step in this tutorial. Open a terminal and run the following commands. The first refreshes the package lists and the second upgrades the installed packages:

:~$ sudo apt update

:~$ sudo apt upgrade

# INSTALL SPARK UBUNTU HOW TO #

:~$ $SPARK_HOME/sbin/start-history-server.sh

Starting .history.HistoryServer, logging to /home/sparkuser/spark/logs/.

As per the configuration, the history server runs on port 18080 by default. Run the PI example again with the spark-submit command, then refresh the History Server page, which should show the recent run.

Note: The screenshots are from version 2.3.7, but the post has been updated to the latest version, which is 2.3.9.

In summary, you have learned the steps involved in installing Apache Spark on a Linux-based Ubuntu server, as well as how to start the History Server and access the web UI.

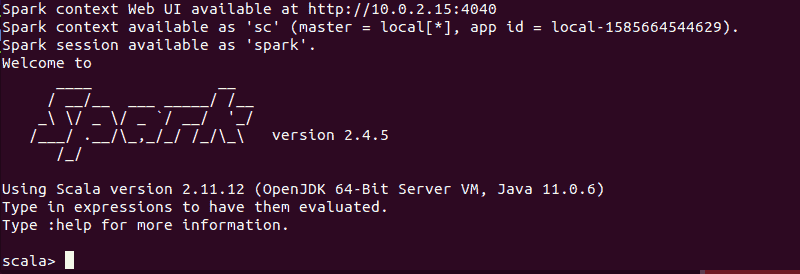

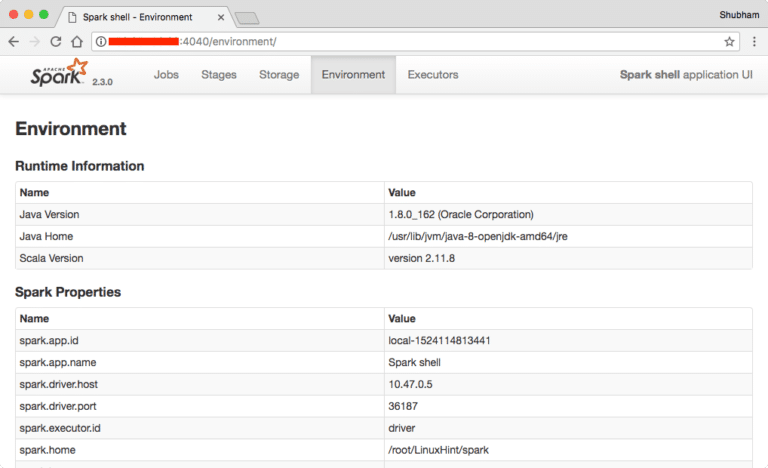

You can find spark-submit in the $SPARK_HOME/bin directory:

:~$ spark-submit --class .SparkPi spark/examples/jars/spark-examples_2.12-3.0.1.jar 10

The Apache Spark binary also comes with an interactive spark-shell. To start a shell that uses the Scala language, go to your $SPARK_HOME/bin directory and type spark-shell. This command loads Spark and displays which version of Spark you are using. By default, spark-shell provides the spark (SparkSession) and sc (SparkContext) objects for you to use.

Note: spark-shell runs Spark with Scala only. To run PySpark, open the pyspark shell by running $SPARK_HOME/bin/pyspark. Make sure Python is installed before running the pyspark shell.

Apache Spark provides a suite of web UIs (Jobs, Stages, Tasks, Storage, Environment, Executors, and SQL) to monitor the status of your Spark application, the resource consumption of the Spark cluster, and the Spark configuration. spark-shell also creates a Spark context web UI that you can open in a browser using your server's IP address. On the Spark web UI, you can see how Spark actions and transformations are executed.

The Spark History Server keeps a log of all completed Spark applications you submit with spark-submit and spark-shell. To enable it, create the $SPARK_HOME/conf/nf file and add the following configuration, which sets the location from which the history server reads event logs: logDirectory file:///tmp/spark-events

Next, create the Spark event log directory. Spark keeps logs there for all the applications you submit. Finally, run $SPARK_HOME/sbin/start-history-server.sh to start the history server.
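The history-server setup above can be sketched as a shell function that appends the event-log settings and creates the event log directory. This is an illustration under assumptions. It assumes the configuration file is Spark's standard spark-defaults.conf and uses the Spark properties spark.eventLog.enabled, spark.eventLog.dir and spark.history.fs.logDirectory. The function name configure_history_server is hypothetical.

```shell
# Hypothetical helper: enable Spark event logging and point the History Server
# at the same directory. Assumes the standard spark-defaults.conf file name.
configure_history_server() {
  local conf_dir="$1"    # e.g. "$SPARK_HOME/conf"
  local events_dir="$2"  # absolute path, e.g. /tmp/spark-events

  # Create the config and event log directories; Spark expects the log dir to exist.
  mkdir -p "$conf_dir" "$events_dir" || return 1

  # Append the settings (key and value separated by whitespace).
  cat >> "$conf_dir/spark-defaults.conf" <<EOF
# Write an event log for every application
spark.eventLog.enabled true
spark.eventLog.dir file://$events_dir
# Location from where history server to read event log
spark.history.fs.logDirectory file://$events_dir
EOF
}

# Example usage:
# configure_history_server "$SPARK_HOME/conf" /tmp/spark-events
# "$SPARK_HOME/sbin/start-history-server.sh"
```

Both properties point to the same directory. Applications write their event logs to that location, and the history server reads them from there.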

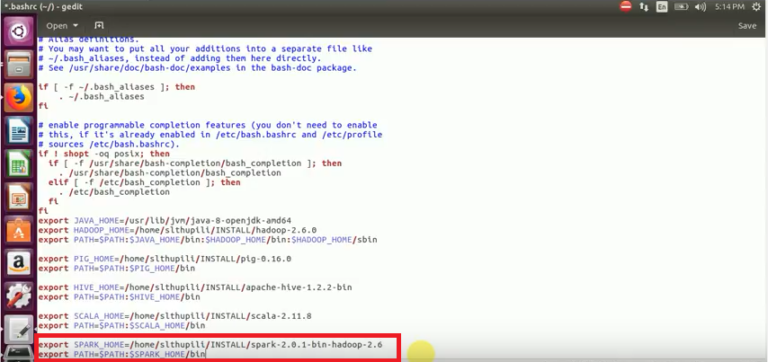

Once the extraction is complete, rename the folder to spark.

Add the Apache Spark environment variables, such as SPARK_HOME, to ~/.bashrc or .profile: open the file in the vi editor and add the variables. Then load the environment variables into the open session by running:

:~$ source ~/.bashrc

If you added them to the .profile file instead, restart your session by closing and reopening it. With this, the Apache Spark installation on Linux Ubuntu is complete.

Now let's run a sample example that comes with the Spark binary distribution. Here I will use the spark-submit command to estimate the value of PI by running the .SparkPi example. Its argument, 10, is the number of partitions (slices) SparkPi splits the computation into, not a number of decimal places.
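For the environment-variable step above, the lines you add to the end of ~/.bashrc might look like the following. This is a sketch. The SPARK_HOME value /home/sparkuser/spark is inferred from the log path the history server prints earlier in this post. Adding $SPARK_HOME/bin to PATH is an assumption that lets you call spark-submit, spark-shell and pyspark without typing the full path.

```shell
# Lines to append to ~/.bashrc (assumed install location; adjust to yours).
# Point SPARK_HOME at the renamed "spark" folder.
export SPARK_HOME=/home/sparkuser/spark
# Make spark-submit, spark-shell and pyspark callable without a full path.
export PATH="$PATH:$SPARK_HOME/bin"
```

After saving the file and running source ~/.bashrc, echo $SPARK_HOME should print the install folder.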

# INSTALL SPARK UBUNTU ARCHIVE #

Once your download is complete, extract the archive contents with the tar command (tar is a file archiving tool).
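The extract-and-rename steps (untar the download, then rename the folder to spark) can be combined into one function, sketched below. It makes several assumptions. The function name extract_spark is hypothetical. The download is assumed to be a gzip-compressed tarball (.tgz) whose first entry is its top-level folder. The archive name in the usage comment is illustrative only.

```shell
# Hypothetical helper: extract a Spark .tgz download and rename its top-level
# folder to "spark" inside the destination directory (default: $HOME).
extract_spark() {
  local archive="$1" dest="${2:-$HOME}" top
  # Name of the first entry in the archive, with any trailing "/..." removed.
  top=$(tar -tzf "$archive" 2>/dev/null | head -n 1 | cut -d/ -f1)
  if [ -z "$top" ]; then
    echo "could not read $archive" >&2
    return 1
  fi
  if [ -e "$dest/spark" ]; then
    echo "$dest/spark already exists; not overwriting" >&2
    return 1
  fi
  # x = extract, z = gunzip, f = archive file, -C = extract into $dest
  tar -xzf "$archive" -C "$dest" || return 1
  mv "$dest/$top" "$dest/spark"
}

# Example usage (archive name is an assumption):
# extract_spark ~/spark-3.0.1-bin-hadoop2.7.tgz ~
```

The function refuses to overwrite an existing spark folder. Without that check, mv would move the new folder inside the old one instead of replacing it.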